Artificial intelligence is now so mainstream that, in some cases, it’s completely invisible.

From routine answers to intricate solutions, AI helps many navigate work — and for human resource professionals, AI is taking over numerous tasks, from the mundane to the complex. But while many recruiters say they use some form of AI technology, what does that really mean? And what does it look like?

How we’re already using AI

In the Littler Annual Employer Survey, 49% of 1,000 respondents cited hiring and recruitment as their top use of AI. Thirty-one percent are using it to guide strategy and management decisions, 24% to analyze workplace policies and 22% to automate tasks formerly done by humans. A small group, 5%, are even using AI to guide litigation strategy.

AI does more than screen resumes for pre-determined data points; it can rank candidates against the job requirements, freeing recruiters up to call in the top matches first. Chatbots are initiating the hiring cycle, asking questions and moving candidates along in the pipeline when their answers meet requirements and, when not, steering them to other positions or politely declining.
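As a rough illustration of what "ranking candidates against the job requirements" can mean, the sketch below scores each candidate by the share of required skills they list and sorts the best matches first. It is a minimal sketch, not how any particular vendor's product works: the function name `rank_candidates`, the data layout and the simple overlap score are all assumptions made for this example.

```python
def rank_candidates(candidates, required_skills):
    """Return (name, score) pairs sorted best match first.

    candidates: dict mapping a candidate name to a list of skills (hypothetical layout).
    required_skills: list of skills taken from the job requirements.
    The score is the fraction of required skills the candidate lists.
    """
    required = {skill.lower() for skill in required_skills}
    if not required:
        raise ValueError("required_skills must not be empty")

    scored = []
    for name, skills in candidates.items():
        matched = required & {skill.lower() for skill in skills}
        scored.append((name, len(matched) / len(required)))

    # Highest score first; ties are broken alphabetically so the order is stable.
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))


if __name__ == "__main__":
    pool = {
        "Candidate A": ["Python", "SQL"],
        "Candidate B": ["SQL"],
        "Candidate C": ["Excel"],
    }
    for name, score in rank_candidates(pool, ["python", "sql"]):
        print(f"{name}: {score:.0%} of required skills")
```

Real tools are likely to weigh experience, education and other signals as well; the point here is only that the output is an ordered list a recruiter can work through from the top.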

To eliminate bias in job postings, AI can analyze job descriptions for words that skew for or against protected classes. On the flip side, AI can block data suggestive of gender, ethnicity and more on resumes. Recruiters are presented with skills and experience from which to choose interviewees, potentially removing even unconscious bias from the selection process.
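The idea of blocking data that hints at gender, ethnicity and similar attributes can be sketched as a simple filter that drops those fields before a recruiter sees the record. This is a simplified sketch under stated assumptions: the field names in `SENSITIVE_FIELDS` and the dictionary layout are hypothetical, and real products would also need to handle free-text clues that a field filter cannot catch.

```python
# Hypothetical field names; a real system would define its own list.
SENSITIVE_FIELDS = {"name", "gender", "date_of_birth", "nationality", "photo_url"}


def redact_resume(resume):
    """Return a copy of the resume record without the sensitive fields."""
    return {key: value for key, value in resume.items() if key not in SENSITIVE_FIELDS}


if __name__ == "__main__":
    record = {
        "name": "Jordan Example",
        "gender": "F",
        "skills": ["payroll", "benefits administration"],
        "years_experience": 7,
    }
    # Only skills and years_experience remain for the recruiter to review.
    print(redact_resume(record))
```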

When is a company ready for AI?

If your applicant stream is homogeneous, AI can help. If time to hire is too lengthy, AI can be invaluable. “While 72% of companies see AI, robotics, and automation as important,” Christa Manning, vice president and solution provider research leader at Bersin, Deloitte Consulting LLP, told HR Dive via email, “only 31% feel ready to navigate changes.”

But AI can be a differentiator for the most mature talent acquisition functions, said Manning. According to Bersin’s recently published Six Key Insights to Put Talent Acquisition at the Center of Business Strategy and Execution, high-performing talent acquisition functions are four times more likely to embed advanced technologies such as cognitive tools and AI.

"Tools like AI and analytics work best," Aaron Crews, chief data analytics officer at Littler, told HR Dive, "when they’re being deployed to solve a specific identified problem. If you’re spending money on solutions that aren’t working, it may be time to use those funds on solutions that will."

Make sure your company is prepared for what could come next, he said in an interview. AI-enabled tech is powerful, but it will give you exactly what you request; if you put in biased information, it will give you biased results. When you build the model of what “kinds” of employees you’re seeking, for example, you need to base your input on objective criteria, such as competencies needed, education and years of experience. It will be critically important to weed out any data that could skew for one group over another. For new users, it might be a good idea to manually verify who the tech is screening out (or not) to ensure candidates are being removed only because their qualifications don’t align with your specs.

Going pro

For most employers, an outside vendor may be the first foray into AI. They’ve already invented the wheel, so to speak, so the technology should be proven. But if you have the resources to create your own algorithms, make sure you have expert help to navigate pitfalls that may be difficult to predict.

If you use an external consultant, Crews warned, be prepared to ask a lot of questions. “Get to the point of being tedious,” he said. “You want to know how it works, what are the outputs, how is the data verified.” Most vendors will not reveal the ingredients of their “secret sauce,” but if they can’t explain in generic terms exactly what attributes the tech is looking for and what it’s not, an employer should ask the vendor to indemnify them from harm if the results go south. If they won’t, find one who will.

Early adopters may see false positives and negatives: the tech grows and learns the more it’s used and the more data it has to draw on. Good questions to ask: Who owns the data? If it doesn’t belong to you, is it being used for any other purpose? Additionally, ask what data is retained in the event of a claim, a question that is especially relevant in light of the General Data Protection Regulation.

If a company opts to work with an outside vendor, make sure they can show that their tech has been validated and for what purpose, Mark Girouard, shareholder at Nilan Johnson Lewis, told HR Dive in an interview. “If the tool they’re using screens well for retail jobs, and that’s what you’re hiring, it should be valid,” he said. If the jobs aren’t similar, it may not be as successful. A good adoption of AI may be for highly specialized jobs, he suggested. Rather than a lengthy, perhaps costly, hiring process, AI can home in on smaller populations with specific skills.

In addition to using AI for recruiting and hiring, some companies are using games that uncover personality traits, much as psychometric testing does. “The candidate feels as though they’re playing a video game, but employers are learning about specific skills as they play,” Girouard said. Be confident the game correlates to the vacancy. “If it’s clear how the game relates to the job, for example, dealing with customers, the risk of screening inappropriately may be reduced,” he added.

Hidden risks…

Even though employers are working with emerging tech, old rules still apply, Crews warned. No part of the selection process can result in adverse impact against a protected group.
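One common first check for adverse impact, not mentioned by the sources quoted here, is the “four-fifths rule” from the federal Uniform Guidelines on Employee Selection Procedures: if one group’s selection rate is less than 80% of the rate for the group with the highest rate, that is generally regarded as evidence of adverse impact. The sketch below applies that arithmetic to counts of applicants and selections. The function names, group labels and numbers are made up for illustration, and a ratio below the threshold is a prompt for closer review rather than a finding on its own.

```python
def impact_ratios(applied, selected):
    """Return each group's selection rate divided by the highest group's rate.

    applied / selected: dicts mapping a group label to a head count (illustrative layout).
    Groups with no applicants are skipped.
    """
    rates = {
        group: selected.get(group, 0) / count
        for group, count in applied.items()
        if count > 0
    }
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        # Nobody was selected from any group, so there is nothing to compare.
        return {group: 1.0 for group in rates}
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_groups(applied, selected, threshold=0.8):
    """List the groups whose ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(applied, selected)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    applied = {"group_a": 100, "group_b": 50}   # made-up numbers
    selected = {"group_a": 40, "group_b": 10}
    print(impact_ratios(applied, selected))
    print(flag_groups(applied, selected))
```

Running the same check on the output of each screening stage, rather than only on final hires, fits the point that no part of the selection process can produce adverse impact.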

One of the biggest risks surrounding AI is that experts don’t know what it’s learning or why, Girouard said. “While HR is accustomed to validation requirements, data scientists are not as well versed.” Data scientists’ position is that the tech works because of the science, but the U.S. Equal Employment Opportunity Commission is watching closely to see if the science is screening inappropriately.

“AI is only as good as the data set it is trained on,” Manning said, “and its success relies on both the quality and quantity of data.” The history of the data also plays a role here. To become more accurate, AI must be trained by receiving feedback over time. “In order to see the best results,” she added, “companies will have to commit to understanding how AI works and invest the time to apply it accurately.”

…and rewards

AI can solve problems you didn't even know you had. For example, one of the problems veterans face is that military speak doesn’t translate well to common American English, but algorithms can bridge the language gap. They can translate rank into skill or experience levels, for example, helping increase veteran hiring.

Another use of the tech, Crews said, is mining information you already have. Your staff is a wealth of data. A bank in Spain mapped the experience and leadership characteristics of its high-performing employees. Using the data in anonymized form, it created skill maps and internal paths to develop other staff members, he explained.

AI can also mine resume banks of past applicants, homing in on those who missed out on the last round but have the skills for something upcoming.

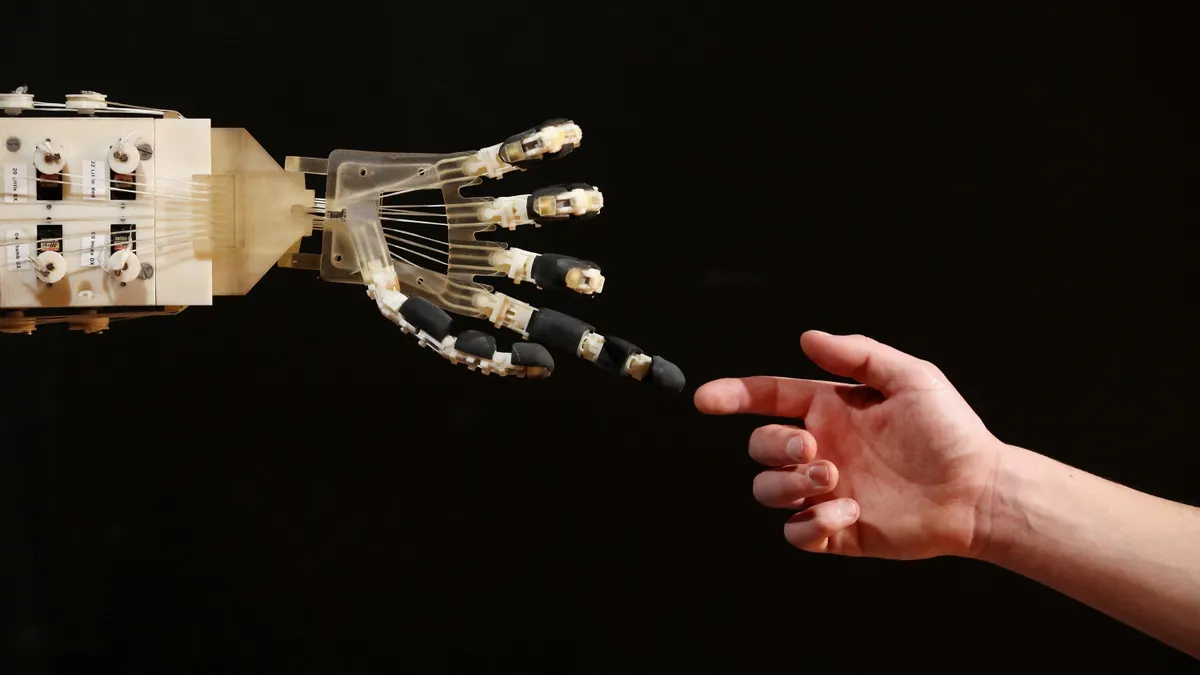

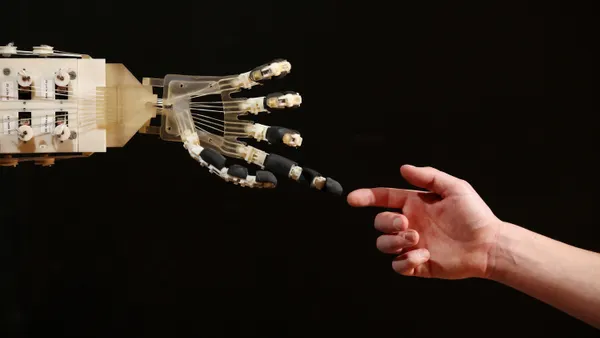

Girouard predicts employers will soon see AI touch every aspect of the business and the lives of its employees — but that understanding of AI may take a while to catch up. “It will be a challenge to find a comfort level,” he said, “with tools making decisions for us without our truly understanding how they’re doing it.”